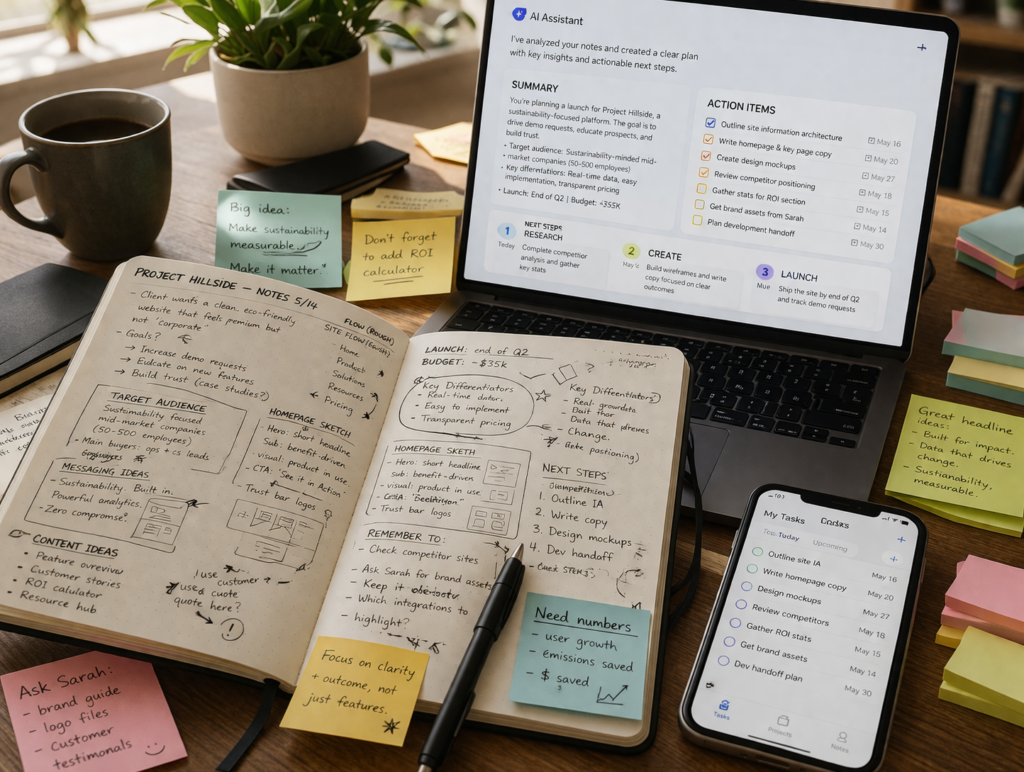

What you capture in the moment is messy by nature. What you do with it afterwards is where the real work begins.

There is a moment, usually late in the day, when you open your notes and realise they are no longer helping you.

At the time, they felt useful. You captured everything. Decisions, ideas, side comments, names. You kept up with the pace of the conversation. You did what a good consultant does in a fast-moving room.

But now, looking at them with a different objective, they resist interpretation. A key decision is buried halfway through a paragraph. An action is implied but never stated. A name sits next to a sentence, but whether that implies ownership or just contribution is unclear. You can reconstruct what happened, but only by rethinking the entire conversation.

This is the quiet inefficiency that sits underneath a lot of otherwise competent work. Not in how information is captured, but in how it is converted. Notes are treated as an end point, when in practice they are only the beginning of a second, more important task: turning what was said into what will be done.

Most teams attempt to solve this by writing better notes. More structure. Cleaner formatting. Clearer headings. It helps, but only marginally. The problem is not that the notes are messy. The problem is that they are being asked to do something they were never designed to do.

AI does not fix the notes. It removes the need for them to be fixed.

Start with the outcome, not the content

The instinct, when faced with raw notes, is to improve them. To organise, summarise, and bring them into a shape that feels more usable. It is a reasonable instinct, and almost always the wrong place to start.

Notes are input. What matters is the output.

The difficulty is that most people never define that output explicitly. They move directly from capturing information to trying to clean it, without deciding what the cleaned version is meant to become. As a result, the transformation remains shallow. The notes become neater, but not more useful.

A more deliberate approach begins by asking a different question: what must exist after these notes are processed?

Not what should be clearer. What should exist.

A list of actions with owners and timelines is a different artefact from a decision log. A prioritised backlog requires different interpretation again. Each of these imposes a different structure on the same underlying material.
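To make the difference between these artefacts concrete, here is a minimal Python sketch of how each might be modelled. The class and field names (`ActionItem`, `Decision`, `BacklogItem`) are illustrative assumptions, not taken from any particular tool, and the example values are invented.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class ActionItem:
    """Something a named person will do by a given date."""
    description: str
    owner: Optional[str] = None   # None means ownership was never stated
    due: Optional[date] = None    # None means no timeline was agreed


@dataclass
class Decision:
    """Something that was settled in the room, with no task attached."""
    statement: str
    decided_by: Optional[str] = None
    rationale: Optional[str] = None


@dataclass
class BacklogItem:
    """A candidate piece of work ranked against the others."""
    title: str
    priority: int  # 1 = highest


# The same meeting can feed all three shapes; each asks a different question.
action = ActionItem("Send revised scope to client", owner="Priya", due=date(2025, 3, 14))
decision = Decision("Phase 2 starts after the pilot review")
backlog = sorted(
    [BacklogItem("Reporting dashboard", 2), BacklogItem("Data migration", 1)],
    key=lambda item: item.priority,
)
```

Choosing one of these shapes before processing the notes is what tells the model which details to look for and which to ignore.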

When that structure is defined in advance, the role of AI becomes precise. It is no longer being asked to tidy information. It is being asked to reshape it toward a known form.

In practice, this is where tools like ChatGPT or Claude start to behave less like “AI assistants” and more like junior team members. Give them a vague instruction and they produce something passable. Give them a clearly defined outcome and they produce something you can actually use.

Stop treating notes as something to fix

There is a persistent belief that the quality of the output depends on the quality of the notes. That if the notes are messy, the result will be unreliable.

In manual workflows, that is largely true. With AI, it is less so.

Raw notes contain signals that structured documents often remove. Repetition reveals emphasis. Interruptions indicate disagreement or uncertainty. Informal phrasing captures intent in a way polished language sometimes obscures. These are not flaws. They are context.

When notes are heavily edited before being processed, much of that context is lost. What remains is cleaner, but also flatter.

This is the reasoning behind recording-first workflows built on tools like Otter.ai or Fireflies.ai. Instead of trying to write perfect notes in real time, you capture everything and rely on AI afterwards to interpret and structure it.

The trade-off is intentional: capture speed over capture quality, then apply structure later when it actually matters.

Move from summarising to deciding

The most common way people use AI with notes is to ask for a summary. It feels productive. It is also where most of the value is lost.

A summary tells you what happened. It rarely tells you what happens next.

The gap between those two is where execution either accelerates or stalls. Meetings generate momentum in conversation, but that momentum only carries forward if it is translated into clear actions.

AI is most useful not as a summariser, but as a decision extractor.

Every set of notes contains decisions that were made, actions that were implied, and responsibilities that were suggested but never formalised. These elements are often embedded in language that does not follow a consistent pattern.

A well-structured prompt asks the model to surface these moments and treat them as commitments. To convert suggestion into action, implication into ownership, and discussion into something that can be tracked.

And this is where the workflow becomes tangible. The output should not live as a paragraph in a document. It should become something you can act on. Whether that is a task database in Notion or a structured task list in ClickUp, the goal is the same: move from interpretation to execution without another layer of manual translation.
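One way to get there is to ask the model for JSON rather than prose, then normalise it before it goes anywhere near a task tool. The sketch below assumes a hypothetical response shape (the keys `decisions`, `actions`, `task`, `owner`, `due` and `source_quote` are my own choice); the sample output is invented for illustration and no model is actually called.

```python
import json

# Invented example of what a model might return when asked for JSON.
SAMPLE_MODEL_OUTPUT = """
{
  "decisions": ["Budget for the pilot is approved"],
  "actions": [
    {"task": "Send revised scope to client", "owner": "Priya",
     "due": "2025-03-14", "source_quote": "Priya will get the scope out by Friday"},
    {"task": " Chase legal on the contract ", "owner": null,
     "due": null, "source_quote": "someone should chase legal"}
  ]
}
"""


def parse_extraction(raw: str) -> dict:
    """Turn the model's JSON text into records ready for a task system."""
    data = json.loads(raw)
    actions = []
    for item in data.get("actions", []):
        actions.append({
            "task": item["task"].strip(),
            # Make missing ownership visible instead of silently dropping it.
            "owner": item.get("owner") or "UNASSIGNED",
            "due": item.get("due"),
            # Keep the originating sentence so a reviewer can check the reading.
            "source_quote": item.get("source_quote", ""),
        })
    return {"decisions": list(data.get("decisions", [])), "actions": actions}


result = parse_extraction(SAMPLE_MODEL_OUTPUT)
for action in result["actions"]:
    print(action["owner"], "|", action["task"], "|", action["due"])
```

Keeping the source quote next to each action is a deliberate choice: it lets someone confirm that an implied commitment really was one without rereading the whole set of notes.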

Design the prompt like a piece of work

There is a tendency to treat prompts as casual instructions. A sentence or two, written quickly, with the expectation that the model will infer the rest.

For simple tasks, that is sufficient. For transformations that involve interpretation, it is not.

Turning notes into an action list requires a sequence of judgements. Identifying what constitutes an action. Interpreting who owns it. Estimating what a reasonable timeline might be. Deciding how to structure the result so it can be used immediately.

When these steps are not made explicit, the model fills the gaps with assumptions.

A more reliable approach is to treat the prompt as a lightweight piece of design. Provide context. Define the objective. Break down the steps. Specify the format.
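As a rough illustration, the four parts can be assembled by a small helper so that none of them is skipped. The function name `build_prompt`, the section labels, and the example wording are all assumptions made for this sketch, not a prescribed template.

```python
def build_prompt(context, objective, steps, output_format, notes):
    """Assemble context, objective, steps and format, followed by the raw notes."""
    numbered_steps = "\n".join(
        f"{number}. {step}" for number, step in enumerate(steps, start=1)
    )
    sections = [
        ("Context", context),
        ("Objective", objective),
        ("Steps", numbered_steps),
        ("Output format", output_format),
        ("Raw notes", notes),
    ]
    return "\n\n".join(f"{title}:\n{body}" for title, body in sections)


prompt = build_prompt(
    context="Weekly project check-in for a client delivery team.",
    objective="Produce an action list with an owner and a due date per item.",
    steps=[
        "Identify every statement that commits someone to doing something.",
        "Infer the owner; write UNASSIGNED if the notes do not make it clear.",
        "Suggest a due date only when the notes give a basis for one.",
        "Return the result in the output format below and nothing else.",
    ],
    output_format='JSON list of objects with keys "task", "owner", "due".',
    notes="sam to chase legal re contract?? budget ok'd, jo unsure on timing",
)
print(prompt)
```

Writing the steps out this way makes the judgements explicit, so the places where the model would otherwise guess become instructions instead.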

At this point, some teams go one step further and automate the entire flow, for example by using Zapier or Make to send processed outputs directly into their task management system. Doing so removes the final bit of friction, which is often where even good workflows fall apart.
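A common pattern for this kind of hand-off is an incoming webhook: the automation tool gives you a URL, and anything posted to it triggers the rest of the flow. The sketch below only builds the request and does not send it. The URL is a placeholder and the payload fields are assumptions; the real ones depend on how the automation is configured.

```python
import json
import urllib.request

# Hypothetical placeholder; a real URL would be issued by the automation tool.
WEBHOOK_URL = "https://example.com/hooks/catch/placeholder"


def build_task_request(task: dict, url: str = WEBHOOK_URL) -> urllib.request.Request:
    """Package one extracted action as a JSON POST request."""
    body = json.dumps(task).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


task = {"task": "Send revised scope to client", "owner": "Priya", "due": "2025-03-14"}
request = build_task_request(task)

# Sending is left out on purpose. With a real webhook URL it would be:
# urllib.request.urlopen(request, timeout=10)
print(request.get_method(), request.full_url)
```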

Review the output as if it matters

AI outputs are easy to trust. They are structured, articulate, and delivered with confidence.

That combination creates the impression of completeness.

It is an impression worth resisting.

The output should be treated as a first pass. A strong one, but still a draft. Not because the model is unreliable, but because it does not have full context.

A disciplined review focuses on whether actions have been captured accurately, whether ownership reflects reality, and whether timelines are meaningful.
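Part of that review can be made mechanical. The sketch below flags actions with a missing owner, an owner who was not in the meeting, a missing due date, or a due date already in the past. The function name `review_flags`, the record fields, and the sample data are assumptions for illustration.

```python
from datetime import date


def review_flags(actions, attendees, today):
    """Return (index, reason) pairs for actions that need a human look."""
    flags = []
    for index, action in enumerate(actions):
        owner = action.get("owner")
        if not owner:
            flags.append((index, "no owner"))
        elif owner not in attendees:
            flags.append((index, "owner was not in the meeting"))

        due = action.get("due")  # expected as an ISO date string, e.g. "2025-03-14"
        if not due:
            flags.append((index, "no due date"))
        elif date.fromisoformat(due) < today:
            flags.append((index, "due date already passed"))
    return flags


actions = [
    {"task": "Send revised scope", "owner": "Priya", "due": "2025-03-14"},
    {"task": "Chase legal on contract", "owner": None, "due": None},
    {"task": "Book pilot review", "owner": "Alex", "due": "2025-02-01"},
]
flags = review_flags(actions, attendees={"Priya", "Sam"}, today=date(2025, 3, 1))
for index, reason in flags:
    print(actions[index]["task"], "->", reason)
```

Checks like these only show where to look. Whether an owner is the right owner, or a date is a realistic one, still has to be decided by someone who was in the room.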

This step is where judgement re-enters the process. AI accelerates the work. It does not replace responsibility for it.

Close the gap between clarity and execution

The final step is rarely discussed because it feels operational rather than strategic. It is also where most of the value is realised.

Once the actions are clear, they need to move into a system where they can be acted on.

Leaving them in a document, no matter how well structured, creates a false sense of completion. The clarity exists, but it is not connected to execution.

The shift happens when the output becomes part of an active workflow. Tasks are assigned. Timelines are visible. Progress can be tracked without returning to the original notes.

At that point, the process becomes repeatable. Capture, transform, execute. Not perfectly, but consistently. And consistency, more than precision, is what most teams are missing.

Key takeaway

Raw notes are not meant to be clear. They are meant to be fast. The problem is not their lack of structure. It is what happens when that lack of structure is carried forward into execution. AI changes that moment. Not by making notes better, but by making them sufficient. By turning incomplete input into complete output. By converting what was said into what will be done, while the context is still fresh enough to be accurate.

The difference is small in effort and disproportionate in impact. And once that gap is closed, it becomes difficult to justify going back to doing it manually.

